Circuit Breaker Pattern: Building Resilient Applications

The circuit breaker pattern is a resilience design pattern that prevents cascading failures in distributed systems. It monitors service failures and opens the circuit to stop requests when failures exceed a threshold, allowing the system to recover.

Inspired by electrical circuit breakers, the pattern stops the flow of requests to a failing service, giving it time to recover while protecting the rest of the system from being dragged down. In modern microservices architectures, where a single request may depend on dozens of services, one slow or failing service can cause widespread outages if nothing contains it.

When a service becomes slow or unresponsive, callers without this protection keep sending requests, which leads to resource exhaustion, connection pool depletion, and failures that cascade across the entire system. The circuit breaker pattern detects these failures and opens the circuit, failing fast and preventing further damage. To follow this article, it helps to be familiar with microservices architecture, the retry pattern, and the timeout pattern.

What Is the Circuit Breaker Pattern

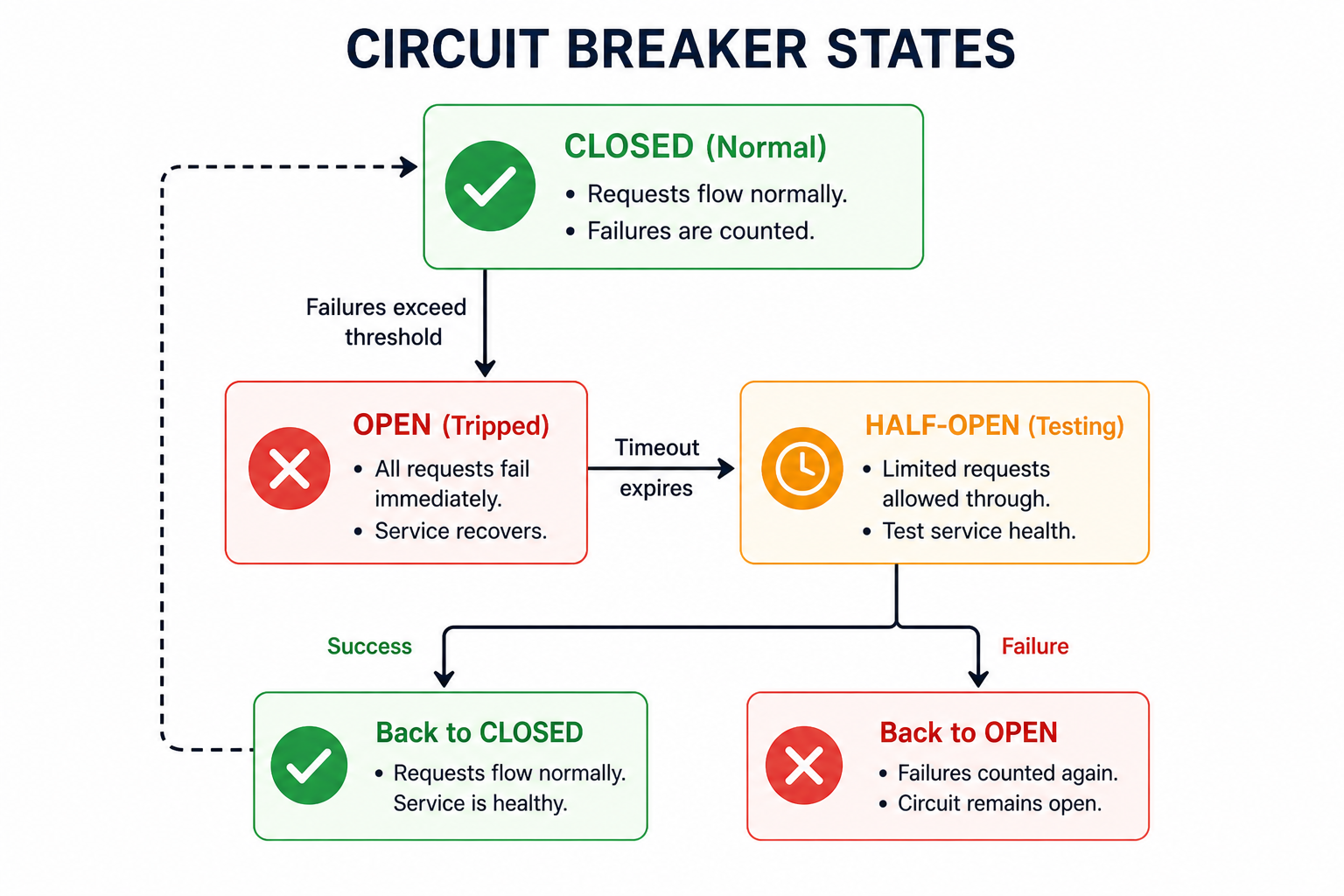

The circuit breaker pattern is a design pattern that monitors for failures and prevents repeated attempts to invoke a failing service. When the number of failures exceeds a configurable threshold, the circuit breaker trips and opens the circuit. Subsequent requests fail immediately without attempting the call, allowing the failing service time to recover.

After a configured wait (the sleep window), the circuit breaker transitions to a half-open state, allowing a limited number of test requests to pass through. If these succeed, the circuit closes again. If they fail, the circuit reopens and the sleep window restarts.

- CLOSED (Normal): Requests flow normally and failures are counted. When the failure threshold is reached, the circuit opens.

- OPEN (Tripped): All requests fail immediately without attempting the call. After the sleep window elapses, the circuit becomes half-open.

- HALF-OPEN (Testing): A limited number of requests are allowed through. Success closes the circuit; failure reopens it.
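The three states and the moves between them can be written down as a small transition table. The following is a minimal sketch, not a production implementation; the `Event` names and the `next_state` helper are hypothetical names chosen for illustration.

```python
from enum import Enum


class State(Enum):
    CLOSED = "closed"
    OPEN = "open"
    HALF_OPEN = "half-open"


class Event(Enum):
    # Hypothetical event names, introduced only for this sketch.
    THRESHOLD_REACHED = "threshold_reached"
    SLEEP_WINDOW_ELAPSED = "sleep_window_elapsed"
    TRIAL_SUCCEEDED = "trial_succeeded"
    TRIAL_FAILED = "trial_failed"


# Every legal move described in the list above.
TRANSITIONS = {
    (State.CLOSED, Event.THRESHOLD_REACHED): State.OPEN,
    (State.OPEN, Event.SLEEP_WINDOW_ELAPSED): State.HALF_OPEN,
    (State.HALF_OPEN, Event.TRIAL_SUCCEEDED): State.CLOSED,
    (State.HALF_OPEN, Event.TRIAL_FAILED): State.OPEN,
}


def next_state(state, event):
    """Return the state that follows `event`; unlisted pairs leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)
```

Keeping the transitions in one table makes it easy to see that the only way back to CLOSED is through HALF-OPEN.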

Why the Circuit Breaker Pattern Matters

In distributed systems, failures are inevitable. Services crash, networks partition, and latency spikes occur. Without circuit breakers, a single failing service can trigger cascading failures that bring down entire systems.

- Prevent Cascading Failures: Stop failures from propagating across services.

- Fast Failure: Fail immediately when a service is known to be unhealthy, avoiding long timeouts.

- Automatic Recovery: Allow failing services time to recover without manual intervention.

- Resource Protection: Prevent thread pools, connection pools, and memory from being exhausted.

- Improved User Experience: Return graceful fallback responses instead of hanging or crashing.

- Reduce Load on Failing Services: Give overwhelmed services time to recover by reducing request volume.

How the Circuit Breaker Works

1. CLOSED State

In the closed state, the circuit breaker allows all requests to pass through to the protected service. It monitors the outcome of each request, tracking failures such as exceptions, timeouts, and HTTP 5xx errors. When the failure count exceeds a configured threshold within a time window, the circuit breaker transitions to the open state.

| Configuration | Description | Example |
|---|---|---|
| failureThreshold | Number of failures before opening the circuit | 5 failures |
| timeWindow | Time period over which failures are counted | 30 seconds |
| requestVolumeThreshold | Minimum requests before failure threshold is considered | 20 requests |
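One way to picture how these three settings interact is a sliding window of recent outcomes. The sketch below is an illustration under stated assumptions: the `FailureWindow` class is hypothetical, the circuit opens once the failure count reaches (rather than strictly exceeds) the threshold, and the current time is passed in explicitly so the behavior is easy to test.

```python
from collections import deque


class FailureWindow:
    """Tracks recent call outcomes; a hypothetical helper, not a library API."""

    def __init__(self, failure_threshold=5, time_window=30.0, request_volume_threshold=20):
        self.failure_threshold = failure_threshold
        self.time_window = time_window  # seconds
        self.request_volume_threshold = request_volume_threshold
        self._outcomes = deque()  # (timestamp, succeeded) pairs, oldest first

    def _evict(self, now):
        # Drop outcomes that have aged out of the time window.
        while self._outcomes and now - self._outcomes[0][0] > self.time_window:
            self._outcomes.popleft()

    def record(self, succeeded, now):
        self._outcomes.append((now, succeeded))
        self._evict(now)

    def should_open(self, now):
        self._evict(now)
        total = len(self._outcomes)
        failures = sum(1 for _, ok in self._outcomes if not ok)
        # Too little traffic means too little evidence, so stay closed.
        if total < self.request_volume_threshold:
            return False
        return failures >= self.failure_threshold
```

With the example values from the table, four failures among 19 requests keep the circuit closed, while the fifth failure on the twentieth request would open it.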

2. OPEN State

In the open state, the circuit breaker immediately fails all requests without attempting to call the downstream service. This prevents the application from wasting resources on calls that are likely to fail. The circuit remains open for a configured duration called the sleep window, giving the failing service time to recover.

During this state, the circuit breaker typically returns fallback responses or throws exceptions immediately. The sleep window should be long enough for the downstream service to recover but not so long that the application remains degraded unnecessarily.
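The fail-fast behavior of the open state amounts to a time check before every call. The sketch below assumes a hypothetical `OpenStateGate` class and `CircuitOpenError` exception, and takes the current time as an argument instead of reading a clock.

```python
class CircuitOpenError(Exception):
    """Raised when a call is rejected without contacting the downstream service."""


class OpenStateGate:
    """Rejects calls while the sleep window is running; illustrative only."""

    def __init__(self, sleep_window=60.0):
        self.sleep_window = sleep_window  # seconds
        self.opened_at = None  # None means the circuit has not been tripped

    def trip(self, now):
        self.opened_at = now

    def reject_if_open(self, now):
        """Raise immediately during the sleep window; return normally otherwise."""
        if self.opened_at is None:
            return
        remaining = self.sleep_window - (now - self.opened_at)
        if remaining > 0:
            raise CircuitOpenError(f"circuit open, retry in {remaining:.0f}s")
        # Sleep window elapsed: the caller may let a trial request through.
```

Because the rejection happens before any network I/O, a caller pays only the cost of one subtraction and one comparison while the dependency is down.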

3. HALF-OPEN State

After the sleep window expires, the circuit breaker transitions to the half-open state. In this state, it allows a limited number of test requests to pass through to the downstream service. If these requests succeed, the circuit breaker transitions back to closed, restoring normal operation. If any test request fails, the circuit reopens, and the sleep window resets.

| Configuration | Description | Example |
|---|---|---|
| sleepWindow | Time circuit stays open before transitioning to half-open | 60 seconds |
| permittedCallsInHalfOpenState | Number of test requests allowed in half-open state | 5 requests |
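The half-open rules (a limited number of trial calls, close on success, reopen on any failure) can be sketched as a small bookkeeping object. The `HalfOpenProbe` class and its string return values are hypothetical, and this version assumes the circuit closes only after every permitted trial call has succeeded.

```python
class HalfOpenProbe:
    """Bookkeeping for trial requests in the half-open state; illustrative only."""

    def __init__(self, permitted_calls=5):
        self.permitted_calls = permitted_calls
        self.started = 0
        self.succeeded = 0

    def try_acquire(self):
        """Return True if another trial request may be sent."""
        if self.started >= self.permitted_calls:
            return False
        self.started += 1
        return True

    def on_result(self, ok):
        """Return the state the breaker should be in after this trial result."""
        if not ok:
            return "open"  # any failed trial reopens the circuit
        self.succeeded += 1
        if self.succeeded >= self.permitted_calls:
            return "closed"  # all permitted trials succeeded
        return "half-open"  # still waiting on remaining trials
```

Requests that arrive after the permitted trials have been handed out would be rejected in the same way as in the open state.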

Circuit Breaker with Fallback

A circuit breaker is most effective when combined with fallback mechanisms. When the circuit is open or the call fails, the application can return a cached response, a default value, or a graceful error message instead of failing completely.

Fallback strategies include returning cached data, returning default values, calling an alternative service, or returning a graceful error response with retry-after headers.
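As an illustration of the cached-data and default-value strategies, the sketch below wraps a call so that the last good value is served when the call fails. The `call_with_fallback` function is a hypothetical helper; it assumes `primary` is a breaker-guarded callable that raises when the circuit is open or the call fails, and it catches `Exception` broadly only to keep the example short.

```python
def call_with_fallback(primary, cache, key, default=None):
    """Call `primary`; on failure return the cached value, else `default`."""
    try:
        value = primary()
    except Exception:
        # Circuit open or call failed: degrade gracefully instead of erroring.
        return cache.get(key, default)
    cache[key] = value  # remember the last good response for future fallbacks
    return value
```

A usage example with a plain dictionary as the cache:

```python
cache = {}
call_with_fallback(lambda: {"price": 10}, cache, "product:1")  # fills the cache

def failing():
    raise RuntimeError("circuit open")

call_with_fallback(failing, cache, "product:1")  # served from the cache
```

In a real system the cached value may be stale, so it is worth deciding per endpoint whether stale data is acceptable.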

Metrics and Monitoring

Circuit breakers should be monitored to understand system health and detect problems early. Key metrics to track include circuit state (closed, open, half-open), failure rate (percentage of failed requests), request count (total requests through circuit breaker), and success rate (percentage of successful requests).

Monitoring tools like Prometheus, Grafana, or Datadog can collect these metrics and trigger alerts when a circuit opens. This allows operations teams to respond before user impact becomes severe.
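The metrics listed above can be derived from two counters and the current state. The following sketch uses a hypothetical `BreakerMetrics` class with an in-memory snapshot; exporting the values to a monitoring system is left out because it depends on the client library in use.

```python
from dataclasses import dataclass


@dataclass
class BreakerMetrics:
    """In-memory counters for one circuit breaker; illustrative only."""

    state: str = "closed"
    successes: int = 0
    failures: int = 0

    def record(self, ok):
        if ok:
            self.successes += 1
        else:
            self.failures += 1

    def snapshot(self):
        total = self.successes + self.failures
        return {
            "state": self.state,
            "request_count": total,
            # Rates are percentages; report 0.0 when there is no traffic yet.
            "failure_rate": 100.0 * self.failures / total if total else 0.0,
            "success_rate": 100.0 * self.successes / total if total else 0.0,
        }
```

An alert on `state` changing to `"open"` is usually more actionable than an alert on the raw failure rate, since the breaker has already applied the threshold logic.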

Common Circuit Breaker Mistakes to Avoid

- Setting Thresholds Too Low: Opening the circuit after only a few, possibly transient, failures causes unnecessary outages. Use realistic thresholds based on normal failure rates.

- Setting Thresholds Too High: The circuit opens too late or never opens, defeating the purpose. Balance sensitivity with stability.

- No Fallback Mechanism: Without fallbacks, users see errors instead of graceful degradation.

- Not Monitoring Circuit State: Without monitoring, you cannot know when circuits are open or why.

- Using the Same Circuit Breaker for All Dependencies: Different dependencies need separate circuit breakers with different configurations.

- Ignoring Timeout Configuration: Circuit breakers should work together with timeouts, so that slow requests count as failures.

- Not Testing Recovery: Verify that circuits close properly when services recover.

Circuit Breaker Best Practices

- Configure Different Thresholds per Service: Critical services need more conservative settings than non-critical ones, such as a lower failure threshold and a longer sleep window.

- Use with Timeouts: Always combine circuit breakers with timeouts to avoid hanging requests.

- Implement Fallbacks: Provide meaningful fallbacks for degraded responses.

- Monitor Circuit State: Alert when circuits open or close frequently.

- Test Failure Scenarios: Simulate service failures to verify circuit breaker behavior.

- Use Exponential Backoff for Retries: When combining the circuit breaker with the retry pattern, space retries with exponential backoff so they do not add load to a struggling service.

- Isolate Circuit Breakers: Each downstream dependency should have its own circuit breaker.

| Service Type | failureThreshold | timeWindow | sleepWindow |
|---|---|---|---|
| Critical Payment Service | 2 failures | 10 seconds | 120 seconds |
| External API | 5 failures | 30 seconds | 60 seconds |
| Analytics Service | 10 failures | 60 seconds | 30 seconds |
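Per-dependency settings like those in the table can live in a small registry keyed by dependency name. This is a sketch under assumptions: the `BreakerConfig` type, the dependency keys, and the choice of the External API row as the default are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BreakerConfig:
    failure_threshold: int  # failures before the circuit opens
    time_window_s: float    # seconds over which failures are counted
    sleep_window_s: float   # seconds the circuit stays open


# Values copied from the table above; the keys are hypothetical names.
CONFIGS = {
    "payment": BreakerConfig(failure_threshold=2, time_window_s=10, sleep_window_s=120),
    "external-api": BreakerConfig(failure_threshold=5, time_window_s=30, sleep_window_s=60),
    "analytics": BreakerConfig(failure_threshold=10, time_window_s=60, sleep_window_s=30),
}

# Assumed default for dependencies without an explicit entry.
DEFAULT_CONFIG = BreakerConfig(failure_threshold=5, time_window_s=30, sleep_window_s=60)


def config_for(dependency):
    """Look up the settings for one downstream dependency."""
    return CONFIGS.get(dependency, DEFAULT_CONFIG)
```

Keeping one entry per dependency also reinforces the isolation rule: each name maps to its own breaker instance and its own thresholds.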

Circuit Breaker vs Retry vs Timeout

| Pattern | Purpose | When to Use |
|---|---|---|
| Circuit Breaker | Stop calling failing services | When the service is likely to continue failing |
| Retry | Repeat failed operations | When the failure is transient, such as a network glitch or a temporary lock |
| Timeout | Limit waiting time | Always, to avoid hanging indefinitely |

These patterns are often used together. A typical combination is timeout plus retry plus circuit breaker: timeouts prevent hanging, retries handle transient failures, and circuit breakers prevent cascading failures when the service is genuinely down.
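A rough sketch of how the three patterns might be layered is shown below. Everything here is an assumption made for illustration: `SimpleBreaker` is deliberately minimal (it counts consecutive failures and never half-opens), `resilient_call` is a hypothetical helper, and the wrapped function `fn` is expected to enforce the timeout itself and raise on expiry.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker refuses to call the downstream service."""


class SimpleBreaker:
    """Opens after N consecutive failures; no half-open state in this sketch."""

    def __init__(self, failure_threshold=3):
        self.failure_threshold = failure_threshold
        self.failures = 0

    @property
    def is_open(self):
        return self.failures >= self.failure_threshold

    def record(self, ok):
        self.failures = 0 if ok else self.failures + 1


def resilient_call(fn, breaker, timeout_s=1.0, max_attempts=3,
                   base_delay_s=0.1, sleep=time.sleep):
    """Timeout inside `fn`, retries with backoff here, breaker around both."""
    for attempt in range(max_attempts):
        if breaker.is_open:
            raise CircuitOpenError("circuit open; not calling downstream")
        try:
            result = fn(timeout_s)  # fn must raise if it exceeds timeout_s
        except Exception:
            breaker.record(False)
            if attempt == max_attempts - 1:
                raise  # retries exhausted: surface the last error
            sleep(base_delay_s * 2 ** attempt)  # exponential backoff
        else:
            breaker.record(True)
            return result
```

The breaker is checked before every attempt, so retries stop as soon as the circuit opens instead of continuing to load a service that is genuinely down.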

Frequently Asked Questions

- What is the difference between circuit breaker and retry?

Retry repeats failed operations, assuming failure is transient. Circuit breaker stops calling failing services, assuming failure will persist. Use retry for temporary issues such as network glitches or lock contention. Use circuit breaker for ongoing failures such as service down or overloaded.

- When should the circuit breaker open?

When the failure rate exceeds a configured threshold within a time window. For example, 50 percent failure rate over 30 seconds with at least 20 requests.

- How long should the circuit stay open?

Long enough for the downstream service to recover. For most services, 30 to 120 seconds is typical. Critical services may need longer; non-critical services may need shorter.

- What is a fallback?

A fallback is an alternative response when the primary call fails or the circuit is open. Examples include cached data, default values, stale data, or a graceful error message.

- Should I use a single circuit breaker for all downstream calls?

No. Each downstream dependency should have its own circuit breaker with its own configuration. A failure in one service should not affect calls to unrelated services.

- What should I learn next after the circuit breaker pattern?

After mastering circuit breakers, explore retry pattern, bulkhead pattern, timeout pattern, and Resilience4j library for comprehensive resilience.

Conclusion

The circuit breaker pattern is an essential tool for building resilient distributed systems. By detecting failures and stopping them from cascading, it protects your application from being dragged down by unhealthy dependencies. When combined with timeouts, retries, and fallbacks, circuit breakers form the foundation of robust microservices architectures.

Implementing circuit breakers requires careful configuration. Thresholds that are too low cause unnecessary outages; thresholds that are too high provide no protection. Monitor circuit states, test failure scenarios, and adjust configurations based on real-world behavior. Implemented well, circuit breakers can markedly improve system stability and user experience.

To deepen your understanding, explore related topics such as the retry pattern, the bulkhead pattern, the timeout pattern, and the Resilience4j library.