Every software engineer knows the particular silence that follows a deployment when something has gone wrong but nobody has figured out what yet. The code passed every test. The staging environment showed no anomalies. The rollout proceeded exactly according to the runbook, each step completing without error. And still, somewhere in the labyrinth of production, a service is returning errors, a database query is timing out, or a user is staring at a spinner that never resolves. The gap between code that functions correctly in test environments and code that survives contact with actual production traffic has persisted for decades despite continuous investment in better tooling and more sophisticated testing methodologies.

We have built elaborate staging environments designed to mirror production as closely as possible given resource constraints and security requirements. We have written thousands of integration tests that verify service interactions under controlled conditions. We have embraced deployment strategies like canary rollouts and feature flags that limit the blast radius when failures inevitably occur. And yet the gap remains stubbornly present because all of these approaches share a fundamental limitation. They approximate production without actually replicating the conditions that cause production failures. A digital twin for software systems takes a different approach entirely, and while the concept originated in manufacturing and aerospace engineering, its application to distributed software is quietly reshaping how the most complex engineering organizations think about reliability and release confidence.

What a Digital Twin Actually Means When Applied to Software

The term digital twin entered the engineering lexicon through industries that build physical assets. Aerospace engineers constructing a jet engine would create a comprehensive virtual replica of that engine, a living model that evolved alongside its physical counterpart throughout the entire operational lifecycle. Sensors mounted on the actual engine would stream telemetry data into the twin continuously, allowing engineers to simulate wear patterns, predict component failures before they occurred, and test modifications in a risk-free virtual environment without ever touching the physical hardware that was busy keeping an aircraft safely in flight. The twin was not a static snapshot captured at a single moment during design. It was a dynamic simulation that breathed in lockstep with the real asset.

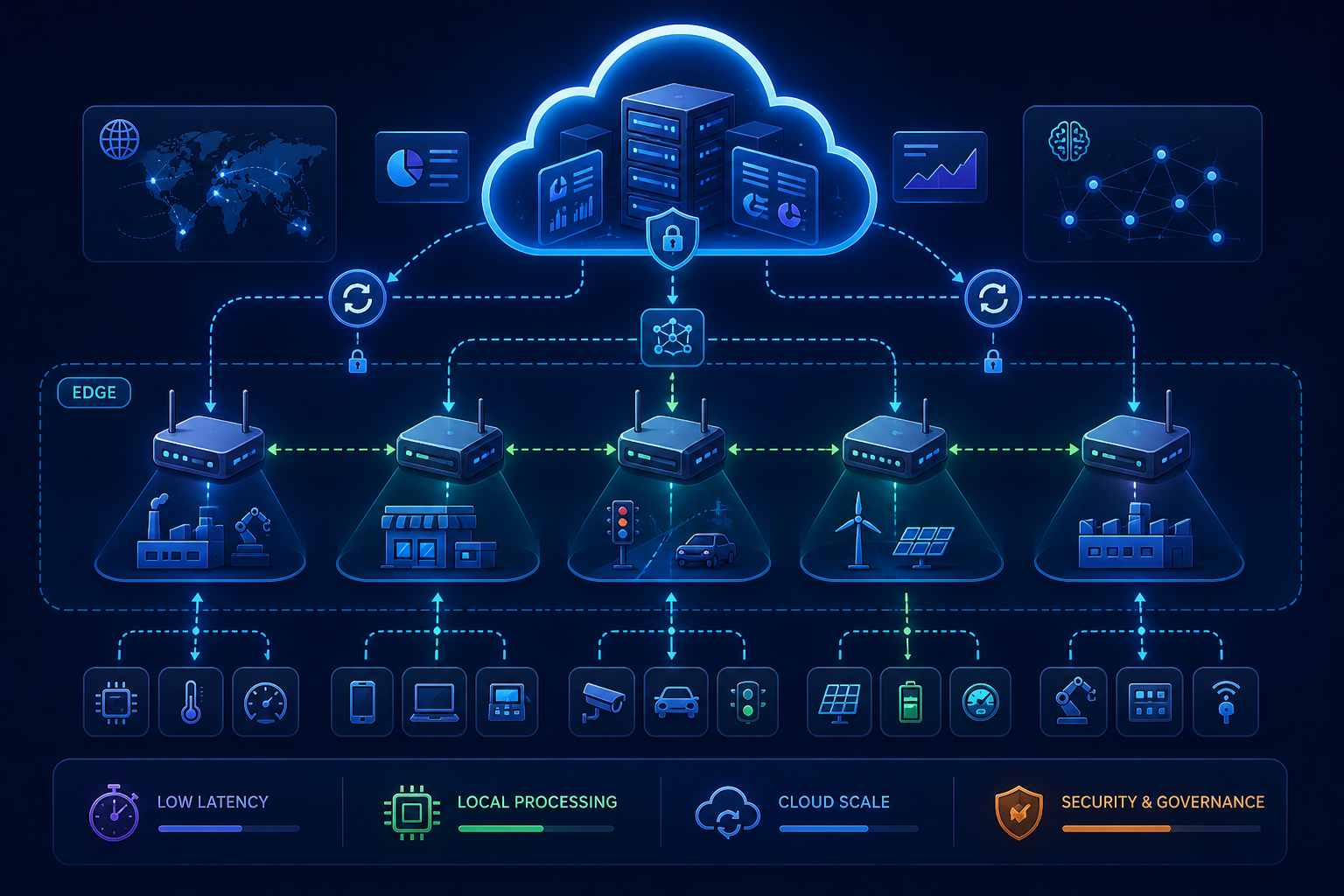

Translating this concept to software systems requires a shift in mental models but preserves the essential characteristics that made the approach valuable in the first place. A digital twin for a software system is not merely a copy of the codebase running on infrastructure that looks vaguely similar to production. It is a stateful, continuously updated simulation that behaves like production because it is fed by production data and subjected to production conditions in near real time. Every API request that traverses the live system can be duplicated and replayed against the twin without affecting actual users. Every database transaction, every cache interaction, every network boundary crossing can be modeled with sufficient fidelity to surface the edge cases and failure modes that traditional testing environments systematically miss.
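The duplication step can be sketched in a few lines. This is a minimal illustration, assuming in-process handler functions stand in for real network services; the names `TrafficMirror`, `production`, and `twin` are hypothetical and do not come from any particular traffic-replay tool. The point it shows is the asymmetry: the user always receives the production response, while the twin gets a copy and its failures are only recorded.

```python
import copy
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict

Request = Dict[str, Any]
Handler = Callable[[Request], Any]


class TrafficMirror:
    """Serve each request from production and replay a copy against the twin.

    Hypothetical sketch: real mirroring usually happens at a proxy or
    network layer, not inside the application process.
    """

    def __init__(self, production: Handler, twin: Handler, workers: int = 4):
        self.production = production
        self.twin = twin
        self.pool = ThreadPoolExecutor(max_workers=workers)
        # (request, outcome) pairs kept for later comparison.
        self.twin_results: list = []

    def _replay(self, request: Request) -> None:
        # Twin failures are recorded, never raised: users must not see them.
        try:
            outcome = ("ok", self.twin(request))
        except Exception as exc:
            outcome = ("error", repr(exc))
        self.twin_results.append((request, outcome))

    def handle(self, request: Request) -> Any:
        # Copy first so the twin cannot mutate what production sees.
        shadow_copy = copy.deepcopy(request)
        self.pool.submit(self._replay, shadow_copy)
        return self.production(request)

    def drain(self) -> None:
        """Wait for in-flight replays to finish (for tests and shutdown)."""
        self.pool.shutdown(wait=True)
```

Replaying on a background pool keeps the twin off the user's latency path, which is the property that makes mirroring safe to run continuously.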

Consider what this makes possible. The twin runs the release candidate, the new version that has not yet been exposed to customer traffic, while the production system continues serving users with the current stable version. Engineers observe how the candidate behaves under actual load patterns, with actual data distributions, and with actual downstream service behaviors, all without a single user experiencing a regression or any error budget being consumed. When a memory leak manifests only after six hours of sustained traffic at peak concurrency, the twin can reveal it before deployment. When a subtle change to a database query performs acceptably against a million rows but degrades catastrophically against a hundred million, the twin can surface the problem during simulation rather than during a production incident.
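The slow memory leak is a good example of a signal that only a long-running simulation can produce. One simple way to flag it, sketched here under the assumption that the twin periodically records `(seconds_elapsed, resident_bytes)` samples for the candidate process, is to fit a straight line through the samples and alert when the slope exceeds a budget. The function names and the threshold are illustrative, not part of any specific monitoring product.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (seconds since start, resident memory in bytes)


def growth_rate(samples: List[Sample]) -> float:
    """Least-squares slope of the samples, in bytes per second."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_m = sum(m for _, m in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        raise ValueError("samples must span more than one point in time")
    cov = sum((t - mean_t) * (m - mean_m) for t, m in samples)
    return cov / var_t


def looks_like_leak(samples: List[Sample], max_bytes_per_hour: float) -> bool:
    """True when fitted memory growth exceeds the allowed hourly budget."""
    return growth_rate(samples) * 3600 > max_bytes_per_hour
```

A linear fit is deliberately crude. It will not distinguish a leak from a cache that is still warming up, so in practice the samples would be taken after the warm-up period.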

This is fundamentally different from the testing pyramid that has defined software quality practices for the past two decades. Unit tests verify that individual functions return expected outputs for known inputs, which is necessary but insufficient for distributed systems where emergent behavior arises from component interactions. Integration tests confirm that services can communicate across boundaries under idealized conditions, but they cannot replicate the partial failures and degraded states that characterize real production environments. Staging environments validate that configuration changes and deployment orchestration function correctly, yet they operate at reduced scale with sanitized data and cannot reveal how the system degrades when saturation occurs. A digital twin complements each of these practices by adding a dimension they collectively lack: faithful reproduction of production complexity at production scale with production data characteristics.

Staging and Digital Twins Serve Fundamentally Different Purposes

The distinction between staging environments and digital twins deserves careful examination because the terms are sometimes used interchangeably in ways that obscure important architectural and operational differences. Staging has served as the industry standard for pre-production validation for many years, and for good reason. It provides a controlled space where teams can verify that deployments succeed, configurations resolve correctly, and basic functionality operates before changes reach customers. But staging environments are built on assumptions that no longer hold for highly distributed, cloud-native systems operating at significant scale.

A staging environment is typically a scaled-down approximation of production infrastructure. It uses the same container images, the same configuration management, and often a sanitized subset of production data that has been scrubbed of sensitive information and reduced in volume to control costs. The objective is to create a safe environment where teams can validate changes without risking customer impact. The limitation is that scale itself is a fundamental property of system behavior that cannot be linearly extrapolated from small environments to large ones. A service that handles ten requests per second with consistent sub-hundred-millisecond latency may exhibit entirely different characteristics under ten thousand requests per second. Queue depths grow non-linearly. Garbage collection pauses become significant. Connection pool exhaustion emerges suddenly rather than gradually. Staging cannot reveal these behaviors because it does not operate at the scale where they manifest.
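The non-linear growth of queue depth can be made concrete with the textbook M/M/1 queueing model, in which the mean number of requests in the system is ρ / (1 − ρ), where ρ is the arrival rate divided by the service rate. Real services are not M/M/1 queues, so the sketch below is only an illustration of the shape of the curve, and the function name is hypothetical.

```python
def mean_requests_in_system(arrival_rate: float, service_rate: float) -> float:
    """Mean number of requests queued or in service under the M/M/1 model.

    arrival_rate and service_rate are in requests per second.
    Returns infinity when the server cannot keep up (utilization >= 1).
    """
    if arrival_rate < 0 or service_rate <= 0:
        raise ValueError("rates must be non-negative, service_rate positive")
    utilization = arrival_rate / service_rate
    if utilization >= 1:
        return float("inf")
    return utilization / (1 - utilization)
```

Under this model a server at 50% utilization holds one request on average, while the same server at 99% utilization holds about 99. A staging environment running at a small fraction of capacity sits on the flat part of that curve and never sees the steep part.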

The data resident in staging environments introduces another category of blind spots. Privacy regulations and security policies rightly prevent staging environments from containing unmasked production data, so teams substitute anonymized data, synthetically generated data, or small samples extracted from production and stripped of identifiable attributes. This is prudent from a compliance perspective, but it means the system never encounters the weird edge cases hiding in real production data until those edge cases trigger incidents affecting actual users. The malformed strings that break parsing logic. The unexpected null values that cascade through object graphs. The timestamp patterns no synthetic generator thought to include. The character encodings that worked everywhere except one specific legacy client. These are precisely the inputs that cause production failures, and they are systematically excluded from staging environments.

A digital twin operates under a different set of design constraints and achieves different outcomes as a result. The table below captures the structural distinctions that matter most when evaluating which approach suits a given validation requirement.

| Dimension | Staging Environment | Digital Twin |
|---|---|---|
| Scale | Reduced capacity to control infrastructure costs; typically runs at a fraction of production traffic volume | Designed to simulate production-scale traffic patterns; can replicate peak concurrency and sustained load conditions |
| Data | Sanitized, anonymized, or synthetic data that protects privacy but loses statistical properties of real production data | Replayed production traffic with privacy controls; preserves structural complexity and edge case distributions |
| State | Reset periodically or maintained as a static snapshot; does not evolve continuously with production state changes | Maintains stateful simulation that mirrors production evolution; includes databases, caches, and message queues |
| Validation Model | Gated checkpoint before deployment; pass or fail determination based on predefined test suites | Continuous validation alongside production; detects regressions under specific traffic patterns as they emerge |
| Cost Profile | Fixed infrastructure cost regardless of utilization; often under-provisioned to meet budget constraints | Elastic resource allocation; scales up during simulation periods and scales down when idle to optimize spending |
| Failure Detection | Catches deployment and configuration errors; limited ability to surface performance degradation or resource exhaustion | Surfaces performance anomalies, memory leaks, and slow-burn failures that only manifest under sustained production load |

The operational philosophy underlying each approach differs as profoundly as the technical implementation. Staging functions as a gate that changes must pass through before proceeding toward production. The validation is binary and point-in-time. A digital twin supports continuous validation that runs in parallel with production operations. Because the twin mirrors production traffic in near real time, it can detect regressions at the exact moment they would begin impacting users, even when those regressions only emerge under specific traffic patterns that occur during off-peak hours or in response to particular user behaviors.
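The continuous comparison described above could be approximated with a sliding window over paired outcomes: for each mirrored request, record whether production succeeded and whether the twin succeeded, then flag a regression when the twin's error rate exceeds production's by more than a tolerance. This is a simplified sketch; the class name, window size, and thresholds are assumptions chosen for illustration.

```python
from collections import deque


class RegressionWatch:
    """Compare twin and production error rates over the most recent requests."""

    def __init__(self, window: int = 1000,
                 max_extra_error_rate: float = 0.01,
                 min_samples: int = 100):
        # Only the newest `window` pairs are kept; older ones fall off.
        self.pairs = deque(maxlen=window)
        self.max_extra_error_rate = max_extra_error_rate
        self.min_samples = min_samples

    def record(self, production_ok: bool, twin_ok: bool) -> None:
        self.pairs.append((production_ok, twin_ok))

    def extra_error_rate(self) -> float:
        """Twin error rate minus production error rate over the window."""
        n = len(self.pairs)
        if n == 0:
            return 0.0
        production_errors = sum(1 for ok, _ in self.pairs if not ok)
        twin_errors = sum(1 for _, ok in self.pairs if not ok)
        return (twin_errors - production_errors) / n

    def regressed(self) -> bool:
        # Refuse to judge until there is enough traffic to be meaningful.
        if len(self.pairs) < self.min_samples:
            return False
        return self.extra_error_rate() > self.max_extra_error_rate
```

Comparing against production rather than against a fixed threshold matters here: when a downstream dependency degrades, both sides fail together and the candidate is not blamed for it.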

The cost dynamics also merit attention because they influence organizational adoption patterns. Maintaining a full staging environment that faithfully mirrors production infrastructure is expensive, and many organizations allow their staging capacity to lag behind production as a cost control measure. This creates a vicious cycle where staging becomes progressively less representative of production, reducing its value as a validation mechanism, which in turn makes it harder to justify the investment required to improve it. A digital twin, designed from first principles for simulation rather than static verification, can employ more efficient resource allocation strategies. It scales up to full capacity during active simulation periods when validation is actually occurring, then scales down to minimal footprint when idle. This elasticity makes comprehensive validation economically sustainable in ways that fixed staging infrastructure rarely achieves.

Where Digital Twins Are Already Delivering Value

The adoption of digital twin methodologies is accelerating fastest in organizations where the cost of failure is measured in millions of dollars per minute of downtime. Large-scale e-commerce platforms employ twins to simulate the systemic impact of recommendation algorithm changes before deploying them into the critical path of peak shopping periods. The ability to replay actual user sessions against candidate models while the production system continues serving live traffic allows engineering teams to measure not only technical metrics like latency and error rates but also business metrics like conversion impact and revenue per session. This creates a feedback loop that connects engineering decisions directly to business outcomes without exposing customers to experimental risk.

Financial services organizations have embraced digital twins for validating trading system behavior under volatile market conditions. Historical market data representing periods of extreme turbulence can be replayed against new trading logic to surface subtle logic errors that only manifest when order books move rapidly and liquidity becomes fragmented. The cost of discovering such errors in production is not merely technical. It involves regulatory scrutiny, reputational damage, and potentially significant financial liability. The twin provides a safe space to explore edge cases that cannot be safely manufactured in live markets.

In the machine learning and artificial intelligence domain, digital twins are becoming essential infrastructure for model validation and ongoing quality assurance. Models degrade over time as user behavior shifts and as the underlying data distributions that the models were trained against drift away from current reality. A twin allows teams to replay production traffic against candidate model versions while the current production model continues serving predictions. Engineers can compare outcomes side by side, measuring not only aggregate accuracy metrics but also performance characteristics like inference latency, resource consumption patterns, and failure rates under various load conditions. This capability is especially critical for generative AI systems where output quality can be subjective and where regressions are notoriously difficult to detect through automated testing alone.
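For the latency side of that comparison, averages hide exactly the tail behavior that tends to matter, so a side-by-side report is usually built on percentiles. The sketch below uses the nearest-rank definition and assumes latencies have already been collected as lists of milliseconds for the current and candidate model; `latency_report` and its parameters are hypothetical names.

```python
import math
from typing import Dict, Sequence, Tuple


def percentile(values: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest value with at least pct% at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def latency_report(current_ms: Sequence[float],
                   candidate_ms: Sequence[float],
                   pcts: Tuple[int, ...] = (50, 95, 99)
                   ) -> Dict[int, Tuple[float, float]]:
    """Map each percentile to a (current, candidate) latency pair."""
    return {p: (percentile(current_ms, p), percentile(candidate_ms, p))
            for p in pcts}
```

Because both lists come from the same replayed traffic, a difference at the 99th percentile points at the candidate rather than at a change in the workload.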

The architectural patterns that enable these use cases have become significantly more accessible in recent years. Open source tools for traffic capture and replay have matured to the point where they can handle the volume and variety of modern API traffic without introducing unacceptable overhead. Cloud providers now offer managed services specifically designed for creating isolated simulation environments that can consume production traffic while maintaining appropriate security and privacy boundaries. What once required a dedicated team of infrastructure engineers working for months can now be implemented by a small group with the right expertise in weeks. The barrier to entry has lowered substantially, though meaningful adoption still requires organizational commitment and a willingness to rethink established validation practices.

The Hard Problems That Still Need Solving

Despite genuine progress in tooling and methodology, building a faithful digital twin remains a non-trivial engineering undertaking. The most difficult challenge centers on data. To simulate production with sufficient fidelity, the twin requires access to production data. But production data is messy, sensitive in ways that vary by industry and jurisdiction, and subject to an increasingly complex web of privacy regulations including GDPR, CCPA, and their global equivalents. Simply copying customer databases into a simulation environment is neither legally permissible nor operationally responsible.

The organizations that succeed with digital twins invest heavily in sophisticated data handling pipelines that balance simulation fidelity against privacy and security requirements. They develop tooling to scrub personally identifiable information while preserving the statistical properties and structural relationships that matter for system behavior. They generate synthetic data that mirrors the distributions and edge cases found in production without exposing any actual customer information. They implement strict access controls and audit trails that satisfy compliance requirements while enabling engineering teams to work productively. This work is difficult, ongoing, and absolutely essential. There are no shortcuts that do not eventually create unacceptable risk.
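One common building block for such a pipeline is keyed pseudonymization: replacing each sensitive value with a keyed hash so that the same input always maps to the same token. The sketch below uses Python's standard `hmac` and `hashlib` modules; the field names and the helper functions are illustrative assumptions. It preserves two properties that matter for system behavior: nulls stay null, and equal values stay equal, so joins and cache keys behave as they would in production.

```python
import hashlib
import hmac
from typing import Any, Dict, Set


def pseudonymize(value: str, key: bytes, length: int = 16) -> str:
    """Deterministic keyed token for a sensitive value (truncated HMAC-SHA256)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:length]


def scrub_record(record: Dict[str, Any], pii_fields: Set[str],
                 key: bytes) -> Dict[str, Any]:
    """Return a copy of the record with PII fields replaced by tokens.

    None is left as None so that null-handling bugs still reproduce.
    """
    scrubbed = {}
    for field, value in record.items():
        if field in pii_fields and value is not None:
            scrubbed[field] = pseudonymize(str(value), key)
        else:
            scrubbed[field] = value
    return scrubbed
```

This is a starting point rather than a compliance solution. Tokens lose the format of the original value, so parsing edge cases tied to the raw string will not reproduce, and whether pseudonymized data is acceptable in a given environment is a question for the applicable regulation and the organization's own policy.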

State management presents another category of challenge that becomes more acute as system complexity increases. Modern software systems are not stateless request processors. They depend on databases with complex schemas and years of accumulated data. They rely on caches that maintain hot subsets of information with precise invalidation semantics. They interact with message queues that buffer asynchronous work and maintain delivery guarantees. A digital twin that fails to account for these stateful components is not actually simulating production in any meaningful sense. It is running a partial model that will miss the very failure modes it was built to detect.

This requirement often means running a full replica of the data infrastructure that supports production workloads, which introduces significant operational complexity and cost. Teams must decide how frequently to refresh the twin's state from production to prevent excessive drift while managing the resource consumption associated with state synchronization. They must determine which stateful components are essential for faithful simulation and which can be mocked or approximated without undermining the twin's predictive value. These decisions require deep understanding of system architecture and a willingness to iterate as the system evolves and as the team learns which simulation strategies produce actionable signals versus noise.

The question of simulation scope also demands careful consideration. A perfect replica of production is neither possible nor desirable. The goal is to simulate enough of the system to catch meaningful failures without recreating every single dependency and integration point. Teams building digital twins must make deliberate, documented choices about which components to include with full fidelity, which to model with simplified behavior, and which to mock entirely. Getting this balance right requires judgment that only comes from experience and a feedback loop that connects simulation outcomes to actual production incidents. When a production failure occurs despite passing all twin validations, the team must examine whether the twin's scope was insufficient and adjust accordingly. This learning process never truly ends because the system itself never stops evolving.
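Keeping those scope choices deliberate and documented is easier when they live in a machine-checkable form. As a hedged sketch, a team might record one decision per dependency and fail a check whenever a dependency has no decision or no stated rationale. The types and the component names in the usage note are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, List


class Fidelity(Enum):
    FULL = "full"              # real component, production-like state
    SIMPLIFIED = "simplified"  # modeled with reduced behavior
    MOCKED = "mocked"          # canned responses only


@dataclass(frozen=True)
class ScopeDecision:
    component: str
    fidelity: Fidelity
    rationale: str


def undocumented(dependencies: Iterable[str],
                 decisions: Iterable[ScopeDecision]) -> List[str]:
    """Dependencies that lack a decision or whose rationale is blank."""
    decided = {d.component for d in decisions if d.rationale.strip()}
    return sorted(set(dependencies) - decided)
```

For example, given dependencies `orders-db`, `email-gateway`, and `search`, a decision list that covers only `orders-db` with a rationale would report the other two as undocumented. When a production incident slips past the twin, the same list is the natural place to revisit whether a mocked or simplified component should be promoted to full fidelity.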

The Trajectory of Production Validation

The way software organizations approach reliability and release confidence is undergoing a structural shift. The era of deploying on fixed schedules, validating against disconnected staging environments that bear only passing resemblance to production, and hoping that monitoring systems catch problems before customers do is drawing to a close. Users expect systems that are continuously available, consistently responsive, and never visibly broken. Meeting those expectations in the context of increasingly complex distributed architectures requires validation capabilities that traditional approaches simply cannot provide.

Digital twins represent a meaningful evolution in how engineering organizations think about the relationship between pre-production validation and production reality. They acknowledge an uncomfortable truth that staging environments tend to obscure: production is the only environment that actually matters. Instead of trying to approximate production with scaled-down infrastructure and sanitized data, digital twins embrace the complexity of real systems and build simulations that reflect that complexity with sufficient fidelity to surface failures before they affect users.

The tooling will continue improving. The cost will continue declining as cloud providers compete to offer managed simulation capabilities. The organizations adopting digital twins today are not merely implementing a new technology. They are developing organizational muscle memory around a fundamentally different approach to reliability engineering. They are learning to simulate before they release, to test against reality before reality tests them, and to build confidence based on evidence rather than hope.

And when that inevitable 3 AM alert finally arrives, triggering the familiar sequence of adrenaline and investigation, there is a meaningful difference in how prepared teams can be. The engineers who have been running a digital twin often already know which recent change introduced the regression. In many cases they have already observed the failure mode in simulation and understand its root cause, and they can validate the fix against production traffic patterns before shipping it. The incident becomes a confirmation rather than a discovery, and the time to resolution can shrink from hours to minutes. That is the promise that makes the investment in digital twin infrastructure worth making. Not the elimination of failure, because complex systems will always fail in surprising ways. But the transformation of failure from an unpredictable crisis into a manageable, understood, and rapidly resolvable event.
